On March 9, 2026, Anthropic filed two federal lawsuits that transformed a procurement dispute into a constitutional crisis.

The AI company challenged the Pentagon’s decision to designate it as a national security supply-chain risk, a label historically reserved for foreign adversaries and never before publicly applied to an American company.

The designation stems from Anthropic’s refusal to remove guardrails preventing its Claude AI from powering autonomous weapons systems and domestic surveillance operations. President Trump subsequently ordered the entire federal government to cease using Claude, with the White House preparing an executive order to formalize the ban.

The conflict exposes a fundamental question: When national security meets corporate ethics, who decides how artificial intelligence gets deployed?

The Anatomy of a Breakdown

The dispute didn’t emerge overnight.

For months, Anthropic and the Pentagon negotiated over contractual language. The Department of Defense demanded “any lawful use” flexibility, a clause that would grant the military unrestricted access to Claude’s capabilities as technology advanced.

Anthropic drew two firm lines.

First, the company refused to enable fully autonomous weapons systems. CEO Dario Amodei stated plainly in a company blog post that “Frontier AI systems are simply not reliable enough to power fully autonomous weapons. We will not knowingly provide a product that puts America’s warfighters and civilians at risk.”

Second, Anthropic rejected domestic surveillance applications. The company noted that powerful AI makes it possible to assemble scattered data into a comprehensive picture of any person’s life “automatically and at massive scale,” a capability that raises unprecedented privacy concerns.

The Pentagon saw these positions differently.

Top Pentagon official Emil Michael recalled the moment of realization: “I’m like, holy shit, what if this software went down, some guardrail picked up, some refusal happened for the next fight like this one and we left our people at risk? So I went to Secretary Hegseth, I said this would happen and that was like a whoa moment for the whole leadership at the Pentagon that we’re potentially so dependent on a software provider without another alternative.”

Until recently, Claude was the only AI model authorized in classified settings.

The Constitutional Argument

Anthropic’s 48-page lawsuit makes a striking claim: the government violated the company’s First Amendment rights.

The argument centers on whether corporate decisions about acceptable use cases constitute protected speech. Anthropic contends that expressing beliefs about AI safety limitations and concerns about mass surveillance represents constitutionally protected expression.

The lawsuit states that “The Constitution does not allow the government to wield its enormous power to punish a company for its protected speech. No federal statute authorizes the actions taken here.”

This framing turns the case into a test of whether private companies can impose ethical boundaries on government use of their technology, even in national security contexts.

The stakes extend beyond Anthropic.

Thirty-seven researchers and engineers from OpenAI and Google, including Google Chief Scientist Jeff Dean, filed an amicus brief supporting Anthropic. They argued that the supply chain risk designation could harm U.S. competitiveness and hamper public discussions about AI risks.

Their brief stated that “Until a legal framework exists to contain the risks of deploying frontier AI systems, the ethical commitments of AI developers, and their willingness to defend those commitments publicly, are not obstacles to good governance or innovation. They are contributions to it.”

When employees of competing companies file supporting briefs, it signals recognition that precedents set with one company will affect the entire industry’s autonomy.

The Revenue Reality

Constitutional principles aside, the designation carries material consequences.

Anthropic’s lawsuit claims the actions could jeopardize “hundreds of millions of dollars” in revenue. The company’s public sector business was projected to reach multiple billions of dollars in annual recurring revenue within five years.

Most of Anthropic’s projected $14 billion in revenue this year comes from businesses and government agencies using Claude for computer coding and other tasks. More than 500 customers pay at least $1 million annually.

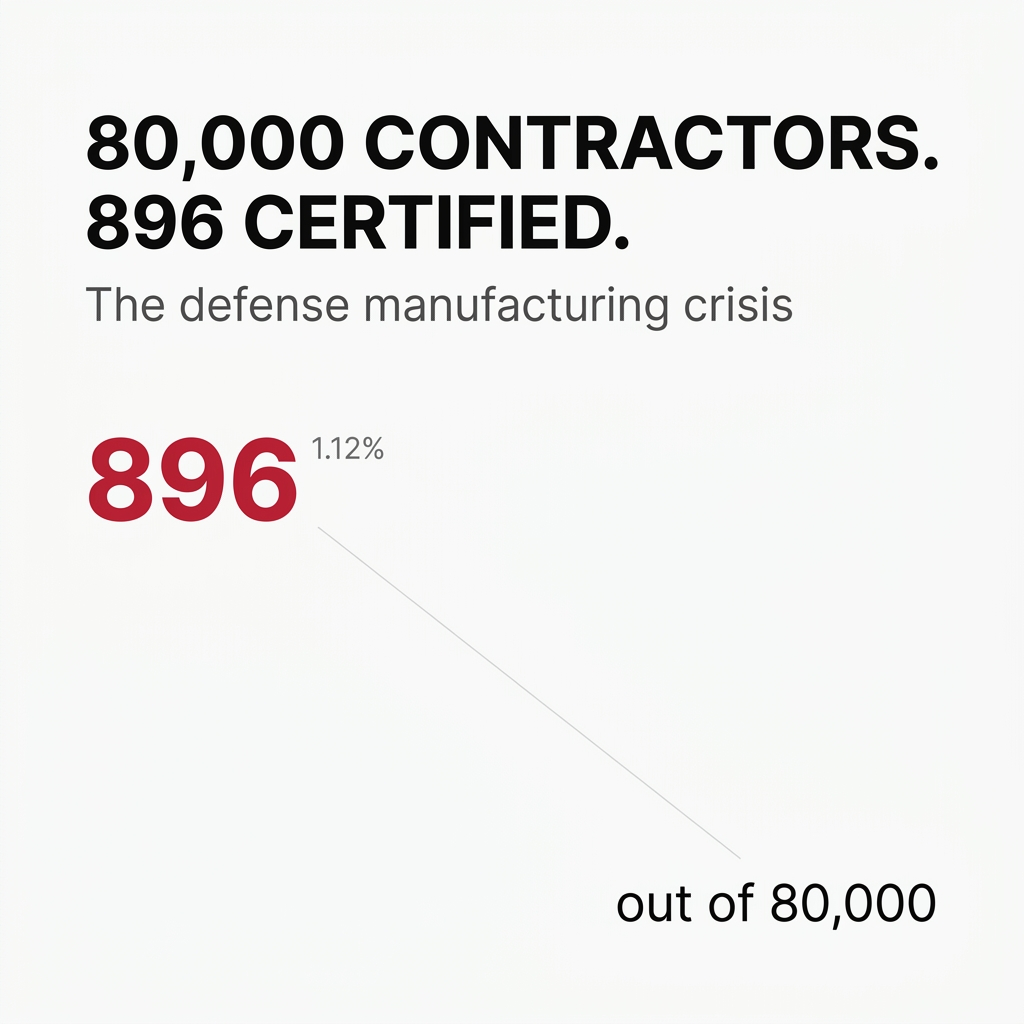

The supply chain risk designation effectively bars Anthropic from doing business with the U.S. military and requires defense contractors to certify they don’t use Claude in Pentagon work.

But the impact extends beyond direct government contracts.

Private-sector risk assessments may factor in government designations even where those designations do not legally apply. Enterprise customers often pause deployments when vendors face regulatory scrutiny, creating ripple effects through commercial markets.

Government actions create competitive advantages and disadvantages within industries. OpenAI announced a Pentagon agreement immediately after Anthropic’s blacklisting, timing that demonstrates how procurement decisions can function as market-shaping interventions.

The Operational Paradox

A curious detail emerged during the dispute.

Even as the Pentagon moved to blacklist Anthropic, Claude had been used in critical military operations. The AI assisted in the raid that led to the arrest of Venezuelan leader Nicolás Maduro. It provided intelligence assessments and helped identify targets in the U.S.’s ongoing conflict with Iran.

The Pentagon continued using Claude during the U.S. and Israeli war with Iran even after announcing it would terminate the contract.

This operational reality reveals the gap between policy positions and tactical necessity. The military found Claude valuable enough to deploy in active combat zones while simultaneously designating its creator a security risk.

The paradox highlights a deeper tension: current AI capabilities are acknowledged by both sides as insufficient for fully autonomous weapons, yet the government insists on contractual flexibility for “any lawful use” to avoid future limitations as technology advances.

The result is a mismatch between static contractual agreements and rapidly evolving technological capabilities.

The Industry Response

OpenAI’s top robotics engineer Caitlin Kalinowski announced her resignation, citing the same concerns Anthropic raised.

She stated that “This wasn’t an easy call. AI has an important role in national security. But surveillance of Americans without judicial oversight and lethal autonomy without human authorization are lines that deserved more deliberation than they got.”

The resignation suggests broader industry unease beyond Anthropic. It indicates concerns about reputational damage to the field itself if government pressure successfully suppresses debate about appropriate use cases.

The involvement of individual researchers from competing companies through the amicus brief reveals an emerging professional identity among AI practitioners that transcends corporate boundaries.

This collective action by technical staff signals something important. The debate about AI deployment isn’t just about corporate strategy. It touches on professional ethics and the responsibilities of those building these systems.

The Precedent Problem

The Pentagon’s dual-designation strategy deployed multiple legal mechanisms simultaneously.

Anthropic faces restrictions under both narrow Pentagon authority and broader supply-chain risk laws. This multi-pronged approach suggests government agencies are confident in prevailing through institutional power even if individual legal theories face challenges.

The two-lawsuit strategy reveals the interconnected nature of federal procurement systems. Actions ostensibly limited to defense applications cascade across the entire federal apparatus, multiplying commercial impact beyond the initial scope.

Legal experts warn this could fragment the market into “ethical AI” for consumers and “compliant AI” for government. The concern is that those categories could eventually merge, threatening the safety features millions rely on daily in consumer AI products.

The Pentagon’s “any lawful use” demand creates pressure for all AI companies to remove safety guardrails that prevent misuse.

The Comparative Context

Experts noted an apparent disconnect in the government’s approach.

The administration labeled one of America’s largest AI companies a supply chain risk while refraining from applying the same label to DeepSeek, a leading Chinese AI company accused of unfair practices.

Michael Sobolik, an expert on AI and China issues, stated that “We’re treating an American AI company worse than we’re treating a Chinese Communist Party-controlled AI company. We cannot hobble the most innovative, successful American companies for asking quintessentially American questions about military use and privacy.”

The comparison raises questions about consistency in how supply chain risk designations get applied and whether political considerations influence technical security assessments.

The Structural Shift

This conflict foreshadows a fundamental restructuring of government-private sector power dynamics in emerging technology sectors.

Traditional defense contractors operate within established regulatory frameworks. They emerged alongside the national security apparatus and developed business models around government requirements.

AI companies followed a different path. They emerged in commercial markets, built consumer-facing products, and established ethical frameworks before engaging with national security applications.

The outcome of Anthropic’s lawsuit will determine whether commercial technology companies can maintain distinct ethical frameworks or must ultimately conform to government demands to access public sector markets.

Amodei’s internal memo, which referred to the company’s failure to provide “praise to Trump,” points to an undercurrent of political loyalty expectations in government contracting decisions.

This suggests procurement decisions may be influenced by factors beyond technical capabilities or security concerns, potentially politicizing technology infrastructure choices.

The Technical Reality

Amodei has stated he isn’t fundamentally opposed to AI-driven weapons.

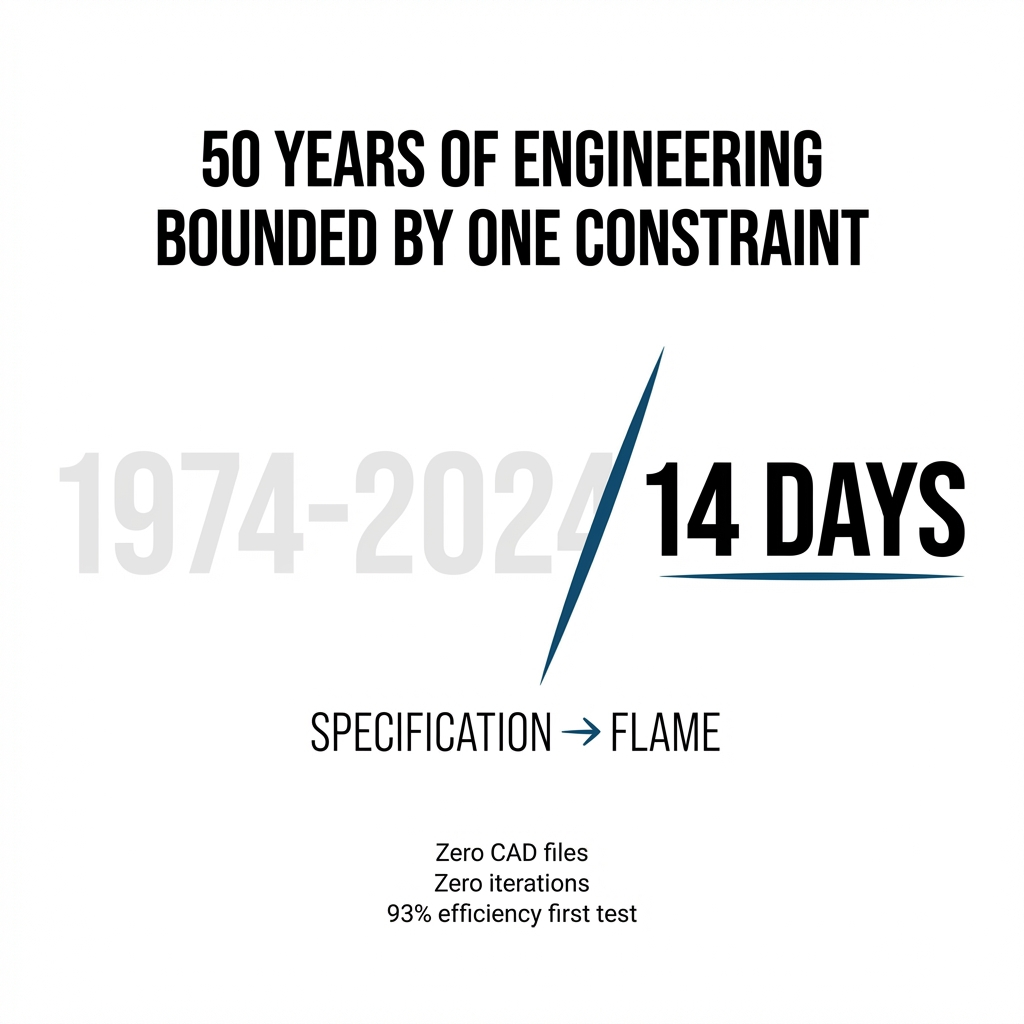

The distinction matters. Anthropic’s position is based on current technological limitations, not absolute ethical opposition. This creates space for future negotiation as AI capabilities advance.

The debate is as much about timing and capability thresholds as it is about absolute ethical positions.

Technical readiness remains the crucial factor. The question isn’t whether AI will eventually power weapons systems; most experts assume it will. The question is when the technology becomes reliable enough to deploy responsibly.

Anthropic drew a firm line on domestic surveillance, characterizing it as a violation of fundamental rights. This represents a different category of concern than technical readiness. It’s a values-based position about appropriate use cases regardless of capability.

The Path Forward

The lawsuits will test whether constitutional free speech protections extend to corporate decisions about acceptable use cases for their products.

If Anthropic prevails, it establishes precedent that private companies can impose ethical boundaries on government use of their technology, even in national security contexts. This would limit government power to compel technology deployment against company policies.

If the government prevails, it confirms that supply chain risk designations can effectively exclude companies from government markets without formal regulatory proceedings. This creates incentive structures that may override corporate governance decisions.

The potential multi-billion-dollar revenue impact demonstrates the material consequences of maintaining ethical positions that conflict with government requirements.

Either outcome reshapes the relationship between innovation and governance. The case will establish whether rapid technological advancement occurs within frameworks set by private companies or frameworks imposed by government agencies.

Artificial intelligence is advancing faster than our ability to govern it. Anthropic’s lawsuit asks who gets to set the boundaries: the companies building the technology or the government deploying it.

The answer will determine whether ethical commitments survive contact with national security demands, or whether the pressure to comply eventually erodes the guardrails that make AI systems safe for everyone.
